While watching the “Deep Learning” episode of South Park, I, shockingly, did some deep learning. The episode’s social commentary on the use of AI in schools was nothing new, but when Stan Marsh started using AI to text his girlfriend, Wendy Testaburger, my jaw all but smacked the floor. AI was being used as a substitute for genuine human connection.

When we send texts, our brains consider a myriad of questions: What is my relationship to the person I’m texting? What do I want to say? How much should I disclose? How long should my response be?

Texting may be compared to writing an email, a seemingly insignificant and arguably mundane task, but it is actually essential to our cognitive functioning. Our cognitive abilities erode when we stop considering these questions. What takes their place is that tip-of-your-tongue feeling: an answer you know you have but can’t retrieve. The harder these thoughts become to retrieve, the more reliant we become on AI to retrieve them for us. People may lose the ability to understand things if they stop thinking critically and connecting new ideas to prior knowledge. AI wedges this mental block in place, so that we become passive characters in our own lives. Relying on AI to sustain and curate relationships, especially romantic ones, will lead to an apathy catastrophe.

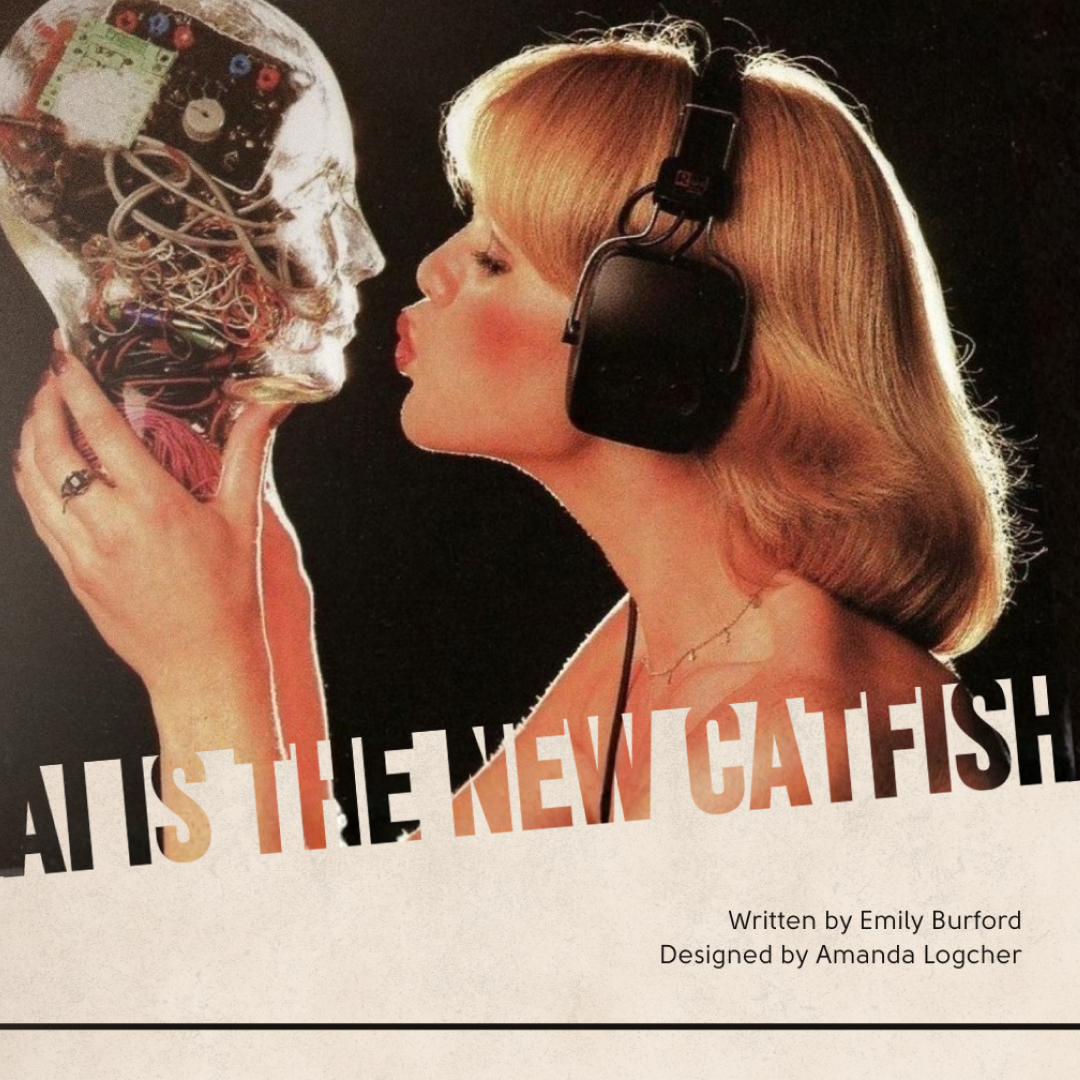

We live in a digital age where it is already easy to doctor images on online dating sites. AI has made catfishing increasingly hard to detect by giving people the means to doctor their personalities too. Users can ask the technology to match the texting style of their romantic interest or even to express interest in the same topics, all in an effort to be more appealing. This appeal is misleading: people think they’re compatible with each other when they’re actually just compatible with a robot regurgitating information about their own interests. Just as in Penny Reid’s “Dating-ish,” where a perfect robot gets created for companionship, the veil of perfection falls because people don’t actually want perfection. They want authenticity. In a world where everyone is the perfect match on paper, romantic experiences become dull and people begin to lose sight of their true selves. How can you know who you are or what you like when your identity becomes tied to performing for others? A world without heartbreak is a world without love and without creativity. If you can’t weed out the wrong matches, then you can’t find fulfillment in the right ones; emotions are muted when there’s only one to feel.

Though this numbness is very plausible, AI also offers a hidden opportunity for those who struggle to navigate modern-day connections. It gives people who are more socially awkward a leg up in the dating world. For those who struggle with the many social facets of texting, like tone, cultural references and punctuation, AI relieves the burden by staying socially relevant for them. Stress can interrupt text exchanges, leaving room for more awkwardness to emerge between conversations. An in-person experience, such as a date, alleviates some of this stress because it provides more direct social cues that put people at ease, an advantage that, for them, may only be reachable through this cheated texting method. While there are benefits to this, social awkwardness is not a new hurdle. For years, people have had to manage social awkwardness, which is, in its own right, a form of authenticity. Social awkwardness doesn’t go away in person; it gets exacerbated. A socially obtuse person’s awkwardness is part of who they are and will dictate how they express themselves. Their partner finding out is inevitable. Using a chatbot to text does not change this fact; it just delays it.

AI can aid with more than text messages, from planning date nights to choosing the perfume you should wear. But using AI to govern decisions about date itineraries and perfume erases all of the good-stress elements that build tension leading up to a date. A large disconnect emerges when the activities that are supposed to be meaningful become impersonal and lackluster. The thrill of dating lies in ambiguity and surprise. Without them, dating becomes procedural and emotionally numbing.

From here, AI can insert itself as a third party in relationships. When disagreements arise, the tool can be used as a sort of stand-in therapist. People will turn to AI to gain perspective, but the only perspective they’ll be hearing is their own. The chatbot is an echo chamber: it picks up on the bias in a question and is programmed to favor its user. It is not capable of being an objective third party. AI doesn’t just pick up on user bias; it also comes with its own. The technology is layered with data-driven racism, sexism, homophobia, transphobia and more. Thus, it may subtly answer in unjust, problematic ways. So, ladies, if your boyfriend decides to ask it about a fight you’ve had, the chatbot may tell him it’s your menstrual cycle.

You might be wondering: how is this any different from turning to friends for advice or a quick rant?

Unlike AI, which only knows one side of you, your friends have witnessed your behaviors firsthand, which equips them with a deeper understanding of the context behind your behavioral patterns. The machine only knows what it’s told, and especially in a disagreement, what you want it to know is how you’ve been wronged.

In a study evaluating the effectiveness of AI-generated emails, AI failed to correctly identify emotional context. AI may be beneficial for increasing efficiency in the workplace, but using AI for relationships will only lead to one place: dystopia. If we rely on this technology to foster connection in romantic relationships, we may end up like Stan Marsh, sitting across from our significant other, sharing a plate of fries, trying to recall the details of a boating accident that, AI said, left us traumatized.